Post Outline

- Why IEX?

- Why Parquet?

- System Outline

- Code

- Links

WHY IEX?

IEX is a relatively new exchange (founded in 2012). For our purposes, what sets it apart from other exchanges is that it provides a robust FREE API for querying its stock exchange data. As a result, we can use the pandas-datareader package to query IEX data quite simply.

WHY PARQUET?

I don't use Hadoop; however, Parquet is a great storage format within the pandas ecosystem as well. It is fast, stable, flexible, and comes with easy compression built in. I originally learned about the format when some of my datasets were too large to fit in memory and I started to use Dask as a drop-in replacement for pandas. It blows away CSVs, and I found it more stable and consistent than HDF5 files.

SYSTEM OUTLINE

This system will query and store quotes for ~630 ETF symbols every 30 seconds during market hours. To view the project setup, visit the GitHub repo. We start by outlining the system process.

- The system starts with the iex_downloader.py script. This script will:

- instantiate the logger,

- get today's market hours and date

- handle timezone conversions to confirm the script is only running during market hours

- if it is market hours, query the IEX API, format the data, and write it to an interim data storage location

- if it is not market hours, query no data and issue a warning.

- The second component is the iex_downloader_utils.py script. This script provides utility functions to format the response data and store it properly.

- The third component is the iex_eod_processor.py script. This script's tasks are:

- to run after the end of the market session

- read the single day's worth of collected intraday data into a pandas DataFrame (if the dataset is too big for memory, you can switch to Dask)

- drop any duplicates or NaN rows.

- store the result in a final `processed` data folder as a single compressed file containing one day's worth of intraday quote data.

- delete the day's data stored in the `interim` folder to free up disk space.

- The final component is the task scheduler. In Linux this is carried out using the `crontab` application. For Windows/Mac systems you will have to adapt the logic to your specific OS. In the `./src/data/iex_cronjob.txt` file I give a template of the tasks that need to be scheduled. These tasks are:

- Every minute, between 7am-2pm Mountain Time, Monday through Friday run the iex_downloader.py script.

- Every minute, between 7am-2pm Mountain Time, Monday through Friday, wait 30 seconds and then run the iex_downloader.py script. Note that crontab has no resolution finer than one minute, so we work around that by scheduling the same task a second time with a timed delay.

- 10 minutes after 2pm Mountain Time, Monday through Friday run the iex_eod_processor.py script.

CODE

First the iex_downloader.py script.
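
The full script lives in the repo. As a rough illustration of the market-hours gate described in the outline (not the repo's actual code), here is a minimal standard-library sketch. The names `is_market_open` and `run_once`, the logger name, and the example session times are all hypothetical, and I assume the session open and close are already available as timezone-aware datetimes.

```python
import logging
from datetime import datetime, timedelta, timezone

logger = logging.getLogger("iex_downloader_sketch")  # hypothetical logger name


def is_market_open(now, session_open, session_close):
    """Return True if `now` falls in [session_open, session_close).

    All three arguments must be timezone-aware. Python compares aware
    datetimes on the UTC timeline, so they may carry different zones.
    """
    for value in (now, session_open, session_close):
        if value.tzinfo is None:
            raise ValueError("expected timezone-aware datetimes")
    return session_open <= now < session_close


def run_once(now, session_open, session_close):
    """Gate one download attempt; returns True when a query would be made."""
    if not is_market_open(now, session_open, session_close):
        logger.warning("Outside market hours at %s; no data queried.", now.isoformat())
        return False
    # The real script would query IEX and write to the interim folder here.
    logger.info("Market open at %s; querying quotes.", now.isoformat())
    return True


# Assumed example: a 9:30-16:00 Eastern session (UTC-4 in summer), checked
# from a machine on Mountain Daylight Time (UTC-6). Fixed offsets keep the
# sketch dependency-free; zoneinfo.ZoneInfo would handle DST in practice.
EDT = timezone(timedelta(hours=-4))
MDT = timezone(timedelta(hours=-6))
session_open = datetime(2018, 6, 1, 9, 30, tzinfo=EDT)
session_close = datetime(2018, 6, 1, 16, 0, tzinfo=EDT)

print(run_once(datetime(2018, 6, 1, 8, 0, tzinfo=MDT), session_open, session_close))
print(run_once(datetime(2018, 6, 1, 14, 5, tzinfo=MDT), session_open, session_close))
```

Because the cron schedule fires during every minute of the 7-14 hours, a gate like this is what stops the script from querying before the open or after the close.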

Next the iex_downloader_utils.py script.
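
To give a feel for what the utility functions do, here is a hedged sketch rather than the repo's code. I assume the response has already been reduced to a plain dict of the form `{symbol: {field: value}}`. The function names, the `query_time` column, the field names, and the `interim/<date>/quotes_<time>.parquet` layout are all hypothetical. Writing Parquet from pandas requires pyarrow or fastparquet to be installed.

```python
from pathlib import Path

import pandas as pd


def format_quotes(raw_quotes, timestamp):
    """Turn {symbol: {field: value}} into a frame with one row per symbol."""
    frame = pd.DataFrame.from_dict(raw_quotes, orient="index")
    frame.index.name = "symbol"
    frame = frame.reset_index()
    # Stamp every row with the time of the query so snapshots can be ordered.
    frame["query_time"] = pd.Timestamp(timestamp)
    return frame


def interim_path(base_dir, timestamp):
    """Build <base_dir>/interim/<YYYY-MM-DD>/quotes_<HHMMSS>.parquet."""
    ts = pd.Timestamp(timestamp)
    file_name = "quotes_" + ts.strftime("%H%M%S") + ".parquet"
    return Path(base_dir) / "interim" / ts.strftime("%Y-%m-%d") / file_name


def write_interim(frame, base_dir, timestamp):
    """Write one snapshot to its own Parquet file and return the path."""
    path = interim_path(base_dir, timestamp)
    path.parent.mkdir(parents=True, exist_ok=True)
    frame.to_parquet(path)  # needs pyarrow or fastparquet
    return path


# Made-up quotes, purely for illustration.
raw = {
    "SPY": {"price": 270.1, "volume": 1000},
    "QQQ": {"price": 170.5, "volume": 800},
}
snapshot = format_quotes(raw, "2018-06-01 10:00:30")
print(snapshot)
print(interim_path("data", "2018-06-01 10:00:30"))
```

Writing each snapshot to its own small file keeps the every-30-seconds job cheap and append-free; the end-of-day step is then responsible for merging them.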

Next the iex_eod_processor.py script.
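
The end-of-day steps from the outline can be sketched roughly as follows. This is an illustration under assumptions, not the repo's script: the function names and the directory arguments are hypothetical, I read "NaN rows" as rows where every column is missing, and gzip is just one of the compression codecs pandas accepts. Reading and writing Parquet requires pyarrow or fastparquet.

```python
import shutil
from pathlib import Path

import pandas as pd


def clean_intraday(frames):
    """Concatenate snapshots, then drop duplicate rows and all-NaN rows."""
    combined = pd.concat(frames, ignore_index=True)
    combined = combined.drop_duplicates()
    # Assumption: only rows with no data at all are treated as "NaN rows".
    combined = combined.dropna(how="all")
    return combined.reset_index(drop=True)


def process_day(interim_day_dir, processed_file):
    """Collapse one day's interim Parquet files into one compressed file."""
    interim_day_dir = Path(interim_day_dir)
    files = sorted(interim_day_dir.glob("*.parquet"))
    if not files:
        raise FileNotFoundError(f"no interim files in {interim_day_dir}")
    day = clean_intraday([pd.read_parquet(f) for f in files])
    processed_file = Path(processed_file)
    processed_file.parent.mkdir(parents=True, exist_ok=True)
    day.to_parquet(processed_file, compression="gzip")
    # Remove the interim data only after the processed file has been written.
    shutil.rmtree(interim_day_dir)
    return day


# In-memory check of the cleaning step with made-up data.
first = pd.DataFrame({"symbol": ["SPY", "QQQ"], "price": [1.0, 2.0]})
second = pd.DataFrame({"symbol": ["SPY", None], "price": [1.0, float("nan")]})
print(clean_intraday([first, second]))
```

If a day's data ever outgrows memory, the same read, dedupe, and write steps map onto Dask's dataframe API, as mentioned in the outline.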

Finally the cronjob task template.

    * 7-14 * * mon-fri /YOUR/DIRECTORY/anaconda3/envs/iex_downloader_env/bin/python3.6 '/YOUR/DIRECTORY/iex_intraday_equity_downloader/src/data/iex_downloader.py' >> /YOUR/DIRECTORY/iex_intraday_equity_downloader/logs/equity_downloader_logs/iex_downloader_log.log

    * 7-14 * * mon-fri sleep 30; /YOUR/DIRECTORY/anaconda3/envs/iex_downloader_env/bin/python3.6 '/YOUR/DIRECTORY/iex_intraday_equity_downloader/src/data/iex_downloader.py' >> /YOUR/DIRECTORY/iex_intraday_equity_downloader/logs/equity_downloader_logs/iex_downloader_log.log

    10 14 * * mon-fri /YOUR/DIRECTORY/anaconda3/envs/iex_downloader_env/bin/python3.6 '/YOUR/DIRECTORY/iex_intraday_equity_downloader/src/data/iex_eod_processor.py' >> /YOUR/DIRECTORY/iex_intraday_equity_downloader/logs/equity_downloader_logs/iex_downloader_log.log

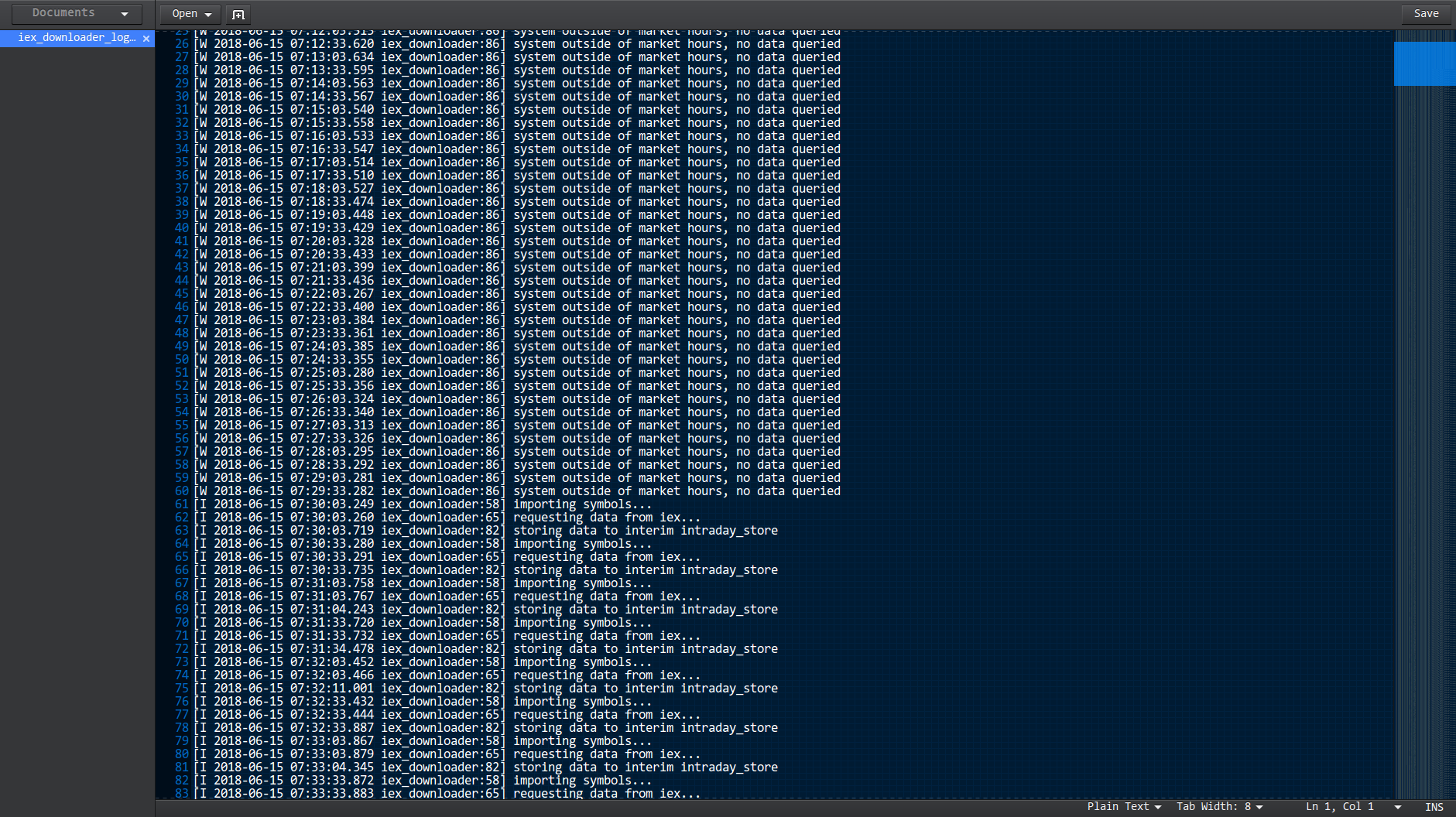

When everything is running correctly, you should see a log file that looks like the image below.

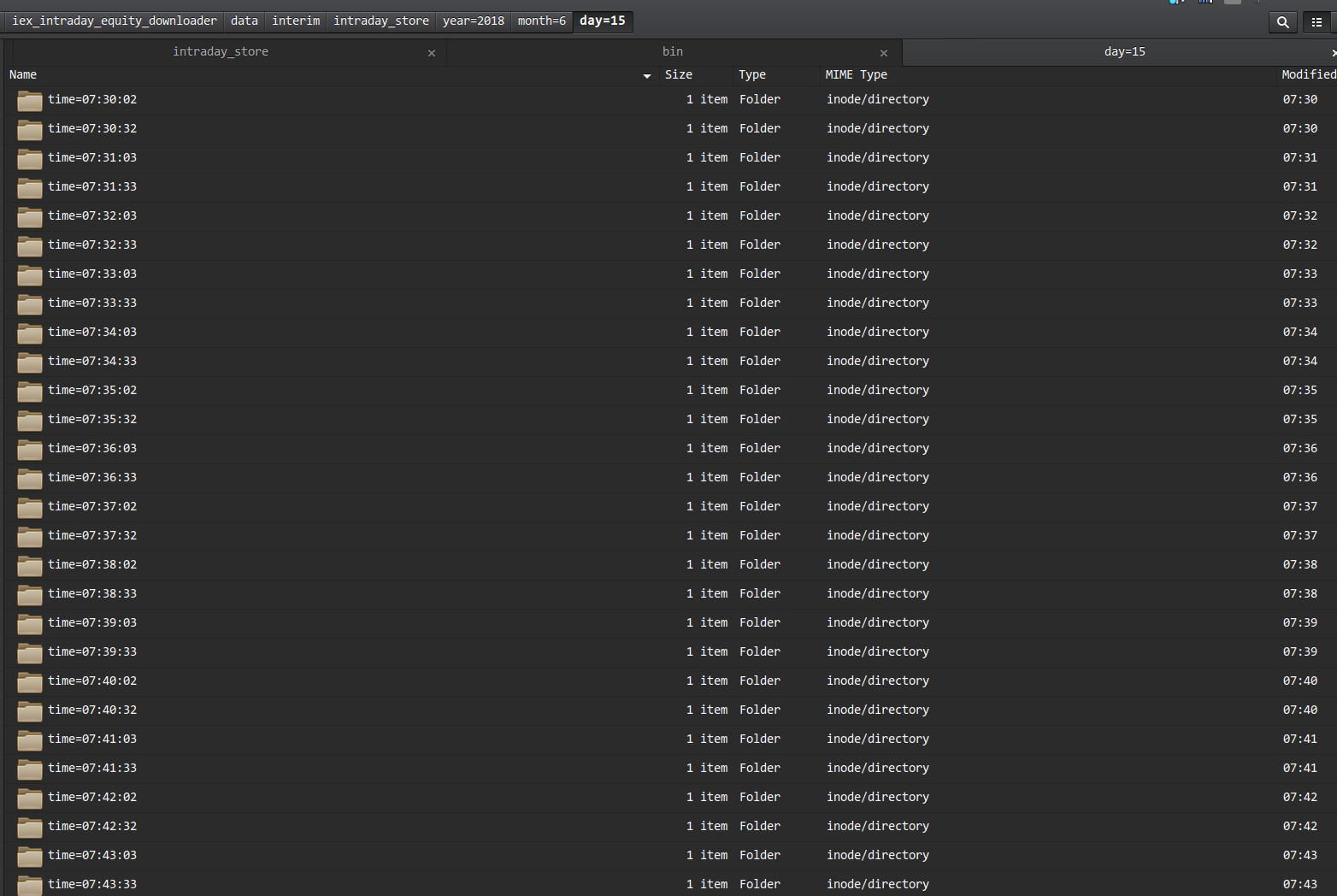

The interim folder structure will look something like the image below.

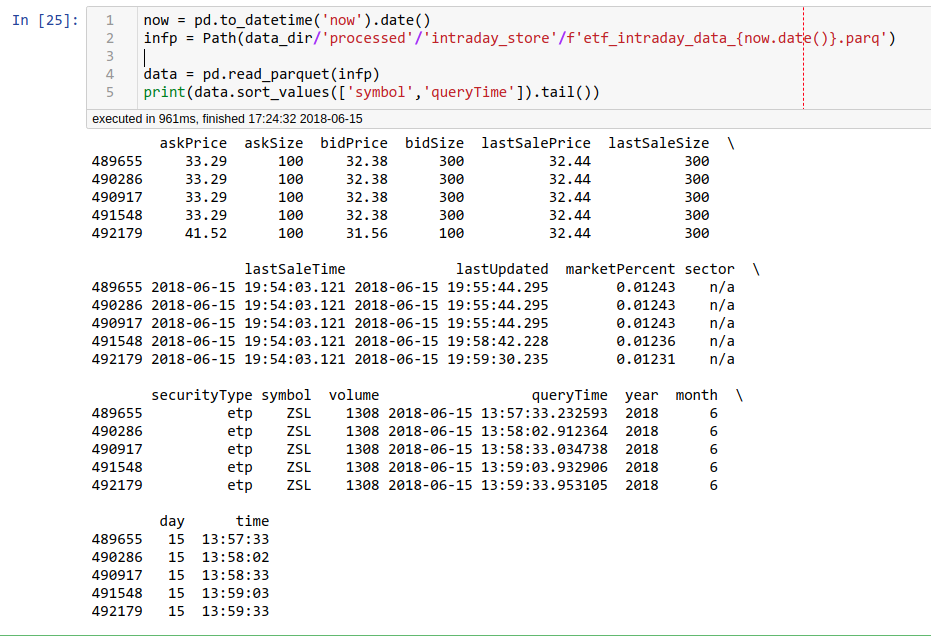

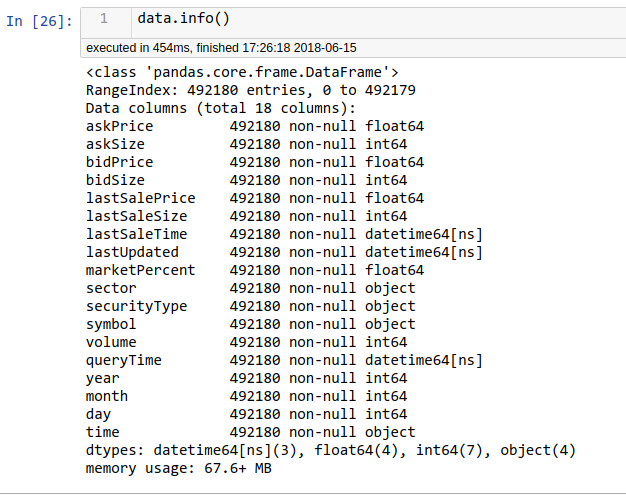

After the market closes and the EOD processor script runs, we can easily import the final dataset into a Jupyter notebook.

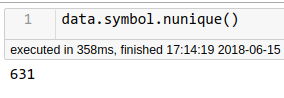

How many unique symbols?